The Internet of Things promises to connect virtually all devices and make sensor data ubiquitously available. Instead of storing all this data in a central location, you can use a peer-to-peer network to store sensor data. Using a peer-to-peer network has several advantages. First of all, the infrastructure is ‘free’ (although in most peer-to-peer networks you also have to participate in storing some of the data). Second, it is redundant (no single point of failure) and not controlled or controllable by a single entity. Third, it can be used to anonymously exchange data, and (as we will see) control who can see what data.

As it turns out, it is possible to use the peer-to-peer infrastructure used by BitTorrent to reliably store and retrieve IoT sensor data.

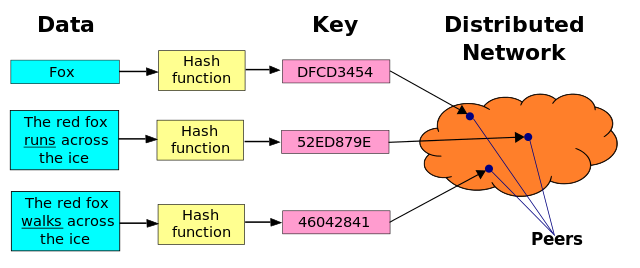

BitTorrent makes use of a so-called distributed hash table (DHT) to locate other BitTorrent nodes that want to share the same file. A DHT is basically a giant dictionary mapping keys to values, spread out over all nodes participating in the DHT network. In the case of BitTorrent, the keys are hashes related to the torrent, and the values are the whereabouts of peers interested in the contents of that torrent. All nodes connected to the DHT store a small subset of the keys, and the DHT network automatically decides which node is responsible for storing which item. The exact mechanism is based on the Kademlia system, as described in this paper.
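To make the "which node stores which key" idea concrete, here is a minimal sketch of the Kademlia-style rule: keys and node IDs share one 160-bit ID space, and a value lives on the nodes whose IDs are closest to the key under the XOR metric. The node labels, the `closest_nodes` helper and the choice of `k = 8` are assumptions for illustration. Real Mainline nodes do not derive their IDs by hashing a label, and a real lookup walks the network iteratively rather than sorting a global list.

```python
import hashlib
from typing import List


def make_id(label: bytes) -> int:
    """Derive a 160-bit ID by hashing a label (illustrative only)."""
    return int.from_bytes(hashlib.sha1(label).digest(), "big")


def xor_distance(a: int, b: int) -> int:
    """Kademlia's distance metric: the XOR of two IDs, read as an integer."""
    return a ^ b


def closest_nodes(key: int, nodes: List[int], k: int = 8) -> List[int]:
    """Return the k node IDs closest to the key; these would hold its value."""
    return sorted(nodes, key=lambda n: xor_distance(n, key))[:k]


# Hypothetical network of 50 nodes with made-up labels.
nodes = [make_id(f"node-{i}".encode()) for i in range(50)]
key = make_id(b"some torrent or sensor key")
responsible = closest_nodes(key, nodes)
```

Because every participant computes the same distances, any node can work out where a key should live without asking a central coordinator.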

It turns out the BitTorrent DHT (which is called ‘Mainline’) has recently been extended to also support storage of custom data that is not related to BitTorrent at all. Clients that implement BEP44 (where BEP stands for ‘BitTorrent Enhancement Proposal’) will accept custom values of at most 1000 bytes, which will be retained in the network for at least two hours. There are two types of custom data that can be stored following BEP44:

- Immutable data. Immutable data are stored in the hash table under the key that is equal to the data hashed with SHA1. As changing the data will inevitably change the hash, it cannot be changed without changing the key as well (effectively making it a different value).

- Mutable data. In order to store mutable data in the network, a client must first generate what is called a ‘keypair’. A keypair consists of a public and a private key. You can then use your private key to ‘sign’ data so that a receiver, who knows your public key, can verify that the data was actually signed by the private key belonging to that public key. This is important, because it allows the DHT to ensure that only the creator of the original data can change it. The signature algorithm used by the Mainline DHT is Ed25519 (ed25519-supercop is one implementation of it). After generating the keypair, you add a ‘sequence number’ to the data, sign it and then submit it for storage in the DHT. The network will accept packets signed by a particular public key to be stored at the key equal to the SHA1 hash of that public key, if no data has been stored yet, or if the sequence number of the data being received is higher than the sequence number of the data already stored. Note that after updating mutable data, older versions of the data may still be in the network (for at most two hours).
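The two storage rules above can be sketched from the point of view of a single storing node. This is a simplified model, not the Mainline implementation: the `StorageNode` class and its `verify` callback are hypothetical, and the callback stands in for an Ed25519 signature check, which the Python standard library does not provide. The sketch also assumes, as we understand BEP44, that the immutable key is the SHA1 of the bencoded value and that the signed message has the form `3:seqi<seq>e1:v<len>:<value>`.

```python
import hashlib

MAX_VALUE_BYTES = 1000  # size limit for custom values mentioned above


def immutable_target(value: bytes) -> bytes:
    """Key for immutable data: SHA1 of the value (assumed: in bencoded form)."""
    bencoded = str(len(value)).encode() + b":" + value
    return hashlib.sha1(bencoded).digest()


def mutable_target(public_key: bytes) -> bytes:
    """Key for mutable data: SHA1 of the publisher's public key."""
    return hashlib.sha1(public_key).digest()


class StorageNode:
    """Hypothetical model of one DHT node's acceptance logic for mutable data."""

    def __init__(self, verify):
        # verify(public_key, message, signature) -> bool; stand-in for Ed25519.
        self.verify = verify
        self.items = {}  # target -> (seq, value)

    def put_mutable(self, public_key: bytes, seq: int, value: bytes,
                    signature: bytes) -> bool:
        if len(value) > MAX_VALUE_BYTES:
            return False
        # Assumed signing format: the bencoded seq and v fields, concatenated.
        message = b"3:seqi%de1:v%d:" % (seq, len(value)) + value
        if not self.verify(public_key, message, signature):
            return False
        target = mutable_target(public_key)
        stored = self.items.get(target)
        # Accept only if nothing is stored yet or the sequence number is higher.
        if stored is not None and seq <= stored[0]:
            return False
        self.items[target] = (seq, value)
        return True


# Stand-in verifier that accepts everything; a real node checks the signature.
node = StorageNode(verify=lambda public_key, message, signature: True)
```

A reader who knows the public key only needs `mutable_target(public_key)` to find the latest value, which is why the public key doubles as the sensor's identity.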

The DHT can be used to store and retrieve mutable sensor data – each sensor is identified by a public key. If the client knows the public key, it can easily find the sensor data in the DHT (remember that it just needs to request the data associated with the key equal to the SHA1 hash of the public key). Interacting with the Mainline DHT turns out to be surprisingly easy with this Node.js module.

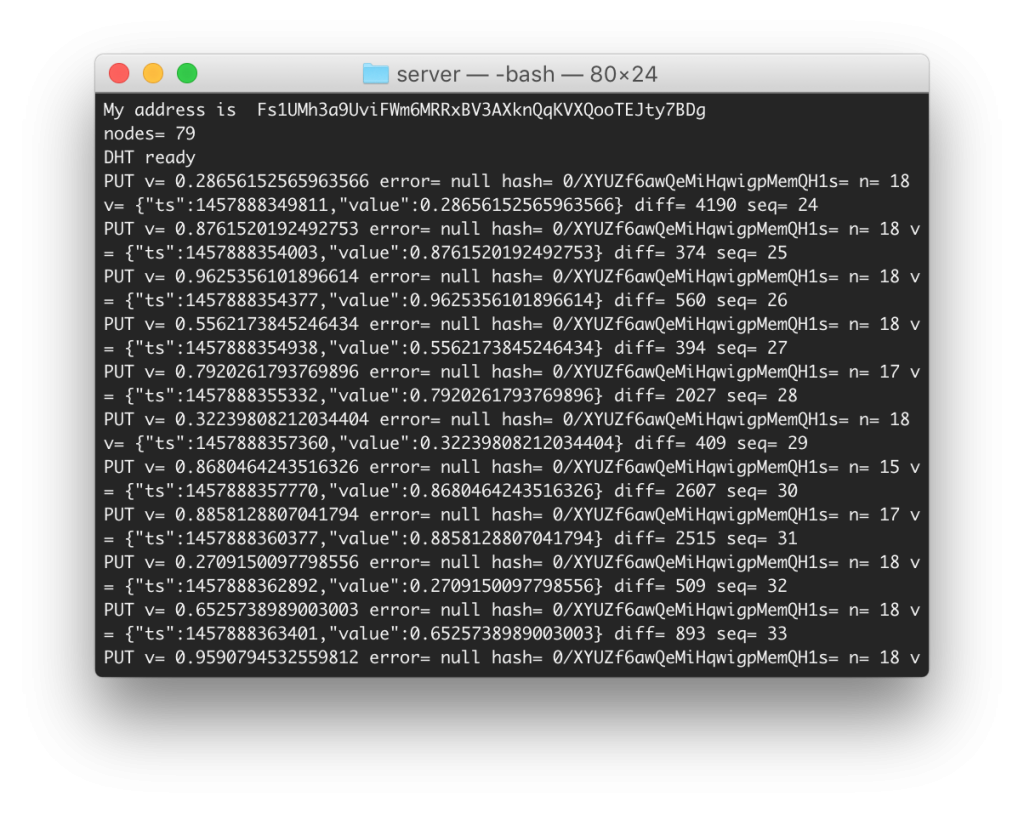

We implemented a proof of concept we like to call the DHToT (Distributed Hash Table of Things). The screenshot shows the sender side, submitting sensor values obtained from a smart meter connected over a serial interface. The sender side submits a value each time it receives a sample from the smart meter.

Note that all that is needed to obtain data from this sensor is knowing the public key. No need to fuss with routers, ports or firewalls; the DHT ‘just works’ (if it isn’t actively blocked, that is – in some places it is because it is used by BitTorrent).

So how fast is the DHToT? It turns out writing to the DHT takes about 2.5 seconds on average – the sensor side (which was behind a NAT router) had about 70 DHT nodes on its ‘shortlist’ and reported having written the data to between 15 and 18 nodes after the 2.5 second period. We were subsequently able to fetch the data with a delay of another 2.5 seconds from a computer located on a completely different network. In general, the data fetched was current or 1-2 sequence IDs delayed. In some cases, we saw very ‘old’ packets being returned together with recent ones – this is most likely due to the fact that some clients will rate-limit the number of requests and updates a single node performs in the DHT. In practice, it seems feasible to retrieve sensor data with a 15 second delay. Tracing the connected DHT node addresses confirmed that our data was actually being stored in different countries.
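Since a single lookup can return a mix of stale and recent packets, a reader can simply keep the response with the highest sequence number. The sketch below shows that selection step only. The `Reading` type and the sample values are made up for illustration, and the sketch assumes every response has already passed signature verification.

```python
from typing import Iterable, NamedTuple, Optional


class Reading(NamedTuple):
    """One verified response from a DHT node (hypothetical shape)."""
    seq: int
    value: bytes


def freshest(responses: Iterable[Reading]) -> Optional[Reading]:
    """Pick the response with the highest sequence number, or None if empty."""
    return max(responses, key=lambda r: r.seq, default=None)


# Made-up responses: two current copies, one slightly delayed, one very old.
responses = [
    Reading(41, b"230.1"),
    Reading(43, b"230.4"),
    Reading(12, b"229.8"),
    Reading(43, b"230.4"),
]
latest = freshest(responses)
```

Comparing the chosen sequence number with the last one seen also gives the reader a cheap way to notice that it is lagging behind the sensor.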

The DHToT seems a promising approach for communicating sensor data that is updated at intervals between 15 seconds and 2 hours, with a maximum size of about 1000 bytes per data point (it is technically possible to store larger packets in the DHT by using multiple public keys, or by inserting ‘links’ to keys that store additional data). The DHT could also be used as a ‘rendezvous point’ for facilitating direct connections to a sensor (the DHT could store the latest reading and the whereabouts of the sensor as IP address and port). For applications where keeping history is important, an approach based on a blockchain (e.g. as used by Bitcoin) could also be of interest.
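The ‘links’ idea for larger payloads could look roughly like the sketch below: the payload is split into chunks that each fit in one DHT value, each chunk is addressed by its content hash (as for immutable data), and a small index holding the concatenated hashes is what the sensor would publish under its public key. We did not build this. The helper names and the 900-byte chunk size are assumptions, and the hash is taken over the bencoded chunk as we understand BEP44 to require.

```python
import hashlib
from typing import Dict, Tuple

MAX_VALUE_BYTES = 1000  # per-value limit mentioned above
CHUNK_BYTES = 900       # assumed chunk size, leaving room for encoding overhead
KEY_BYTES = 20          # length of a SHA1 digest


def content_key(chunk: bytes) -> bytes:
    """Content hash of a chunk (assumed: SHA1 over its bencoded form)."""
    return hashlib.sha1(str(len(chunk)).encode() + b":" + chunk).digest()


def split_with_index(payload: bytes) -> Tuple[bytes, Dict[bytes, bytes]]:
    """Split a payload into chunks and build an index of their keys."""
    chunks = {}
    keys = []
    for offset in range(0, len(payload), CHUNK_BYTES):
        chunk = payload[offset:offset + CHUNK_BYTES]
        key = content_key(chunk)
        chunks[key] = chunk
        keys.append(key)
    index = b"".join(keys)
    if len(index) > MAX_VALUE_BYTES:
        raise ValueError("index too large for one value; add another level")
    return index, chunks


def reassemble(index: bytes, chunks: Dict[bytes, bytes]) -> bytes:
    """Follow the links in the index and join the chunks in order."""
    keys = [index[i:i + KEY_BYTES] for i in range(0, len(index), KEY_BYTES)]
    return b"".join(chunks[key] for key in keys)


payload = bytes(range(256)) * 10  # 2560 bytes of sample data
index, chunks = split_with_index(payload)
restored = reassemble(index, chunks)
```

With these numbers a single index can link to at most 50 chunks, so a payload of up to roughly 45 KB fits in one level of indirection.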